Kubeadm is a Kubernetes distribution that offers every customization option you can think of: container runtime, container network interface, cluster storage, and ingress. You can configure all of these aspects of your cluster, but you also have to understand the individual options and their setup. For a complete overview of this remarkable distribution, see my previous article.

This article is a tutorial about creating a three-node Kubernetes cluster. One node will be the control plane node, and the other two will be worker nodes. The particular Kubernetes components are etcd for the cluster state, containerd as the CRI, and Calico as the CNI. Let’s get started.

The complete project source code is also available in my GitHub repository kubernetes-kubeadm-terraform.

Prerequisites

To follow along, you will need a Hetzner Cloud account. Then, get an access token following the official documentation.

Also, choose appropriate server types for your setup. For this demonstration, I will use the following:

- 1x CX11 node (1 Intel CPU, 2GB RAM, 40GB SSD)

- 2x CX21 nodes (2 Intel CPU, 4GB RAM, 40GB SSD)

You also need to install Terraform: head over to terraform.io and grab the binary suitable for your computer.

Project Structure

With the following commands, you will create a file structure that conforms to Terraform best practices.

TF_PROJECT_NAME=kops_github

mkdir $TF_PROJECT_NAME

tffiles=('main' 'variables' 'providers' 'versions' 'outputs'); for file in "${tffiles[@]}" ; do touch "$TF_PROJECT_NAME/$file".tf; done

Provider Configuration

We start by configuring the required providers inside versions.tf. Enter the following content, using the most up-to-date versions available:

//versions.tf

terraform {

required_providers {

hcloud = {

source = "hetznercloud/hcloud"

version = ">=1.35.2"

}

tls = {

source = "hashicorp/tls"

version = ">=4.0.3"

}

}

}

The provider configuration is slim: We just need to define a variable that will keep the Hetzner cloud access token…

//providers.tf

provider "hcloud" {

token = var.hcloud_token

}

… and the variable itself is defined in the same-named file.

//variables.tf

variable "hcloud_token" {

sensitive = true

}

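Handling secrets in Terraform means not hard-coding them in configuration files, where they would end up as plaintext in version control. Instead, you pass them as environment variables to the terraform commands. Keep in mind that Terraform can still record sensitive values in its state file, so treat the state as sensitive too.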

Resources

We will create two different kinds of resources: An SSH key for accessing the servers, and servers with the role of either controller or worker.

SSH Key

To access the machines, we will use a dynamically created SSH key. The resource configuration is this:

//resources.tf

resource "tls_private_key" "generic-ssh-key" {

algorithm = "RSA"

rsa_bits = 4096

}

To connect to the servers via SSH with this key, we use a local-exec provisioner, which runs a command on the machine that executes Terraform. It takes the private and public key attributes of the resource and stores them in files.

//resources.tf

resource "tls_private_key" "generic-ssh-key" {

//...

provisioner "local-exec" {

command = <<EOF

mkdir -p .ssh

cat <<< "${self.private_key_openssh}" > .ssh/id_rsa.key

cat <<< "${self.public_key_openssh}" > .ssh/id_rsa.pub

chmod 400 .ssh/id_rsa.key

chmod 400 .ssh/id_rsa.pub

EOF

}

}
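
The server resources later in this article reference hcloud_ssh_key.primary-ssh-key, and the terraform apply log shows it being created, so the generated public key also has to be registered with Hetzner Cloud. A minimal sketch of what that resource could look like follows; the value of the name argument is an assumption, and the actual definition in the repository may differ.

```hcl
# Sketch: register the generated public key with Hetzner Cloud so that
# new servers can be created with it pre-installed for the root user.
# The "name" value is an assumption; any label unique in the project works.
resource "hcloud_ssh_key" "primary-ssh-key" {
  name       = "primary-ssh-key"
  public_key = tls_private_key.generic-ssh-key.public_key_openssh
}
```

Because the public key is read from the tls_private_key resource, Terraform creates the key pair first and the Hetzner SSH key afterwards.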

Server Variables

In Terraform, you can write out each resource definition individually, or use meta-arguments such as for_each that iterate over a list of items and use their values. My project uses the latter, which makes it flexible enough to handle any number of controller or worker nodes.

We need variables that define the lists of controllers and workers, and we need an object that holds the servers’ configuration, like server size and OS image.

// variables.tf

variable "server_config" {

default = ({

controller_server_type = "cx21"

worker_server_type = "cpx21"

image = "debian-11"

k8s_controller_instances = ["controller"]

k8s_worker_instances = ["worker1", "worker2"]

})

}
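
If you want Terraform to reject malformed overrides of this variable, one option is to add a type constraint. The following is a sketch of the same variable with an explicit object type; the attribute names and default values are taken from the definition above, while the type constraint itself is my addition and not part of the original project.

```hcl
# Sketch: the same variable with an explicit type constraint.
# Terraform then fails early if an override omits an attribute
# or supplies a value of the wrong type.
variable "server_config" {
  type = object({
    controller_server_type   = string
    worker_server_type       = string
    image                    = string
    k8s_controller_instances = list(string)
    k8s_worker_instances     = list(string)
  })

  default = {
    controller_server_type   = "cx21"
    worker_server_type       = "cpx21"
    image                    = "debian-11"
    k8s_controller_instances = ["controller"]
    k8s_worker_instances     = ["worker1", "worker2"]
  }
}
```

Adding a third worker is then a matter of appending another name to k8s_worker_instances.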

Server Resources

I like to think of the following resource definitions as generators: They consume variables iteratively.

The controller is this:

resource "hcloud_server" "controller" {

for_each = toset(var.server_config.k8s_controller_instances)

name = each.key

server_type = var.server_config.controller_server_type

image = var.server_config.image

location = "nbg1"

ssh_keys = [hcloud_ssh_key.primary-ssh-key.name]

}

And for the workers:

resource "hcloud_server" "worker" {

for_each = toset(var.server_config.k8s_worker_instances)

name = each.key

server_type = var.server_config.worker_server_type

image = var.server_config.image

location = "fsn1"

ssh_keys = [hcloud_ssh_key.primary-ssh-key.name]

}

Kubeadm Automation: Controller

Up to this point, the above declarations merely provision the infrastructure. To install kubeadm on the servers, we need to run shell scripts.

In Terraform, a provisioner is additional logic that is invoked during the creation of a resource. We will use a remote-exec provisioner to copy installation scripts to the servers and run them there. This provisioner connects with the SSH key that we created earlier.

The configuration inside the controller is this:

resource "hcloud_server" "controller" {

//...

connection {

type = "ssh"

user = "root"

private_key = tls_private_key.generic-ssh-key.private_key_openssh

host = self.ipv4_address

}

provisioner "remote-exec" {

scripts = [

"./bin/01_install.sh",

"./bin/02_kubeadm_init.sh"

]

}

}

The two scripts will install all necessary packages and then start the kubeadm cluster initialization. The content of these scripts is derived from the manual installation tutorial, which will be published later.

When the controller is provisioned, we need to get the kubeadm join command from it, which includes a dynamically created join token. With the local-exec provisioner, we create a shell script inside this project, then connect to the controller via SSH, grab the join command, and append it to the shell script. Here is how:

resource "hcloud_server" "controller" {

//...

provisioner "local-exec" {

command = <<EOF

rm -rvf ./bin/03_kubeadm_join.sh

echo "echo 1 > /proc/sys/net/ipv4/ip_forward" > ./bin/03_kubeadm_join.sh

ssh root@${self.ipv4_address} -o StrictHostKeyChecking=no -i .ssh/id_rsa.key "kubeadm token create --print-join-command" >> ./bin/03_kubeadm_join.sh

EOF

}

}

Kubeadm Automation: Worker

The workers are provisioned in a very similar way: We copy and execute the installation script and the dynamically created kubeadm join shell script. Furthermore, since the workers need to wait for the controller to be fully provisioned, we add a depends_on relationship.

The complete configuration is this:

resource "hcloud_server" "worker" {

//...

depends_on = [

hcloud_server.controller

]

connection {

type = "ssh"

user = "root"

private_key = tls_private_key.generic-ssh-key.private_key_openssh

host = self.ipv4_address

}

provisioner "remote-exec" {

scripts = [

"./bin/01_install.sh",

"./bin/03_kubeadm_join.sh"

]

}

}

Cluster Provisioning

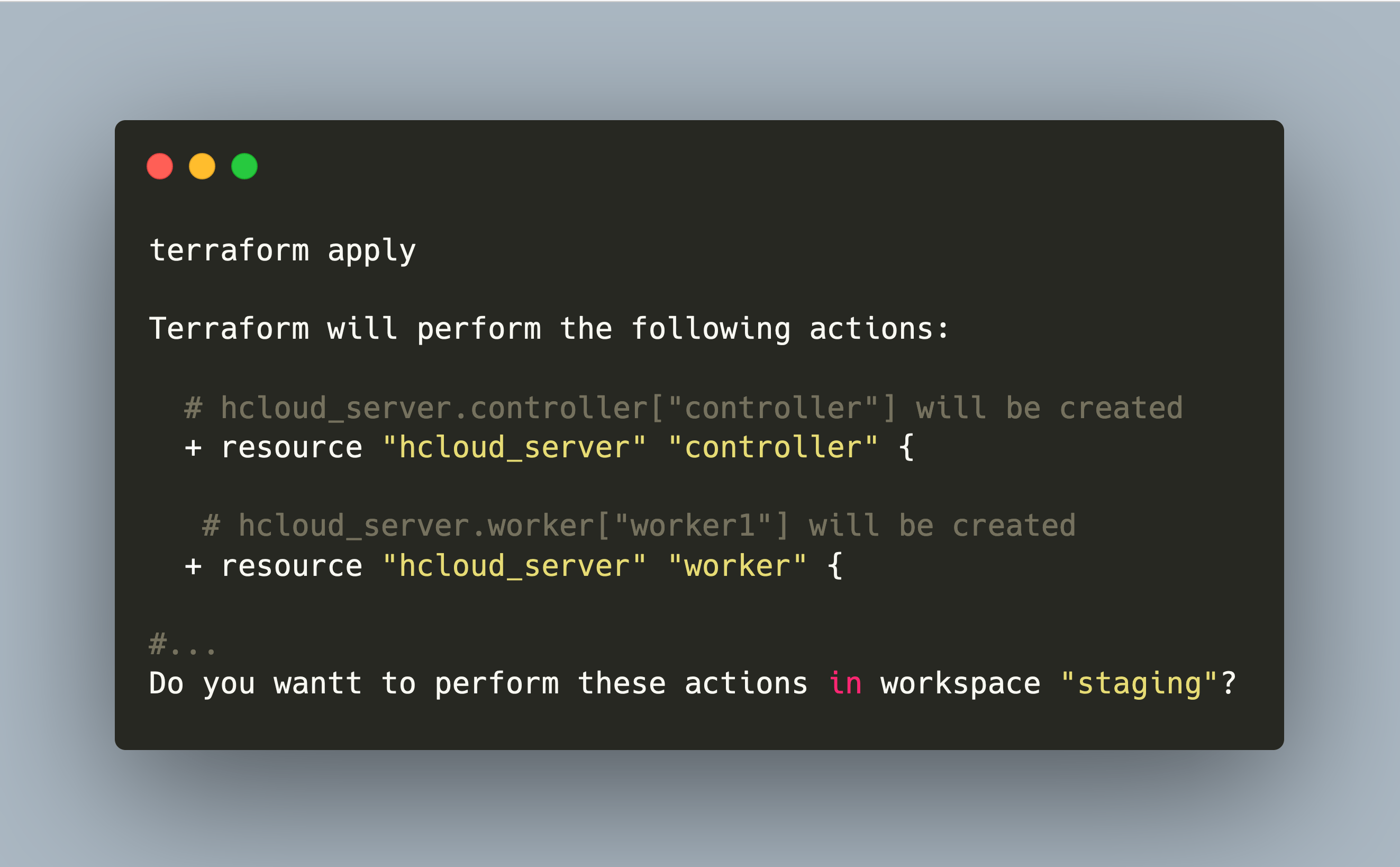

All Terraform resources are defined. After running terraform init once to download the providers, we can start the provisioning.

export TF_VAR_hcloud_token=SECRET

terraform apply

#...

Terraform will perform the following actions:

# hcloud_server.controller["controller"] will be created

+ resource "hcloud_server" "controller" {

# hcloud_server.worker["worker1"] will be created

+ resource "hcloud_server" "worker" {

#...

Do you want to perform these actions in workspace "staging"?

Terraform will perform the actions described above.

Only 'yes' will be accepted to approve.

Enter a value: yes

tls_private_key.generic-ssh-key: Creating...

tls_private_key.generic-ssh-key: Provisioning with 'local-exec'...

tls_private_key.generic-ssh-key (local-exec): (output suppressed due to sensitive value in config)

tls_private_key.generic-ssh-key: Creation complete after 2s [id=763061a58dc3b7c5b9c09b76e4ddbf4cdb52c3ab]

hcloud_ssh_key.primary-ssh-key: Creating...

hcloud_ssh_key.primary-ssh-key: Creation complete after 2s [id=8482290]

hcloud_server.controller["controller"]: Creating...

hcloud_server.controller["controller"]: Still creating... [10s elapsed]

hcloud_server.controller["controller"]: Provisioning with 'remote-exec'...

hcloud_server.controller["controller"] (remote-exec): Connecting to remote host via SSH...

#...

Run terraform show to see the controller's IP address, then grab the kubeconfig file via SSH:

ssh root@${CONTROLLER_IP} -o StrictHostKeyChecking=no -i .ssh/id_rsa.key "cat /root/.kube/config"

Save this output as your local kubeconfig file, and you can operate the cluster with kubectl:

kubectl get nodes

NAME STATUS ROLES AGE VERSION

controller-staging Ready control-plane,master 22m v1.23.11

worker1-staging Ready <none> 49s v1.23.11

worker2-staging Ready <none> 5m51s v1.23.11
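
Instead of reading the controller's IP address from the terraform show output, you could expose it as a Terraform output. The following is a sketch for outputs.tf, one of the files created in the project structure; the output name controller_ipv4 is an assumption and not taken from the repository.

```hcl
# outputs.tf (sketch; the output name "controller_ipv4" is an assumption)
# Builds a map from server name to public IPv4 address for every
# instance created by the for_each in hcloud_server.controller.
output "controller_ipv4" {
  description = "Public IPv4 address of each controller, keyed by server name"
  value = {
    for name, server in hcloud_server.controller : name => server.ipv4_address
  }
}
```

After terraform apply, running terraform output controller_ipv4 prints the map, which is handy as input for the SSH command that fetches the kubeconfig.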

Conclusion

This article showed how to use Terraform for provisioning a kubeadm cluster. We started with the configuration of the Terraform providers, then created a variable that holds the cluster configuration, and continued with the resource definitions for the controller and the workers. When this Terraform project runs, it creates the controller first, grabs its kubeadm join command, then provisions the workers and joins them into a cluster. After about 2 minutes, you have a fully available cluster. Copy the kubeconfig to your machine and start working with your cluster.