The Kubespray distribution brings the power of Ansible to the configuration, setup, and maintenance of a Kubernetes cluster. In an inventory file, you define which nodes are part of the cluster and which role each of them plays. Additional configuration files then fine-tune the settings of the various Kubernetes components. By applying the playbook (Ansible jargon for the installation and setup scripts that consume your configuration), the desired state is manifested on the target servers. Using Kubespray means managing your cluster as true infrastructure as code: every subsequent run leads to the very same desired state.

This article is a hands-on tutorial for installing a 3-node Kubernetes cluster with a single controller node. We start with the prerequisite setup of the nodes, then install the control plane and add the worker nodes. Finally, we will also see how to upgrade the cluster.

Prerequisites

To follow along, you need this:

- 3 servers with a compatible OS

- Hardware resources: at least 1.5 GB of RAM for controller nodes and 1 GB of RAM for worker nodes

- SSH access to these servers

Just as before, I will do the installation on my preferred cloud environment at Hetzner. The hardware configuration is this:

- 1x CX11 node (1 Intel CPU, 2GB RAM, 40GB SSD)

- 2x CX21 nodes (2 Intel CPU, 4GB RAM, 40GB SSD)

Configure Kubespray

1. Kubespray Controller Setup

The Kubespray controller is a machine on which you install the Kubespray environment and from which you can access all servers that will be part of your cluster. Preferably, this controller is not itself part of the cluster.

On this controller, following the official documentation, we first clone the Kubespray repository and install Ansible in a Python virtual environment: an isolated workspace, somewhat similar to a container, that pins all required package versions.

Run the following commands:

```shell
git clone --depth=1 https://github.com/kubernetes-sigs/kubespray.git
KUBESPRAYDIR="$(pwd)/kubespray"
VENVDIR="$KUBESPRAYDIR/.venv"
ANSIBLE_VERSION=2.12
virtualenv --python="$(which python3)" "$VENVDIR"
source "$VENVDIR/bin/activate"
cd "$KUBESPRAYDIR"
pip install -U -r "requirements-$ANSIBLE_VERSION.txt"
```
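The commands above assume that git, python3, and virtualenv are installed on the Kubespray controller. As a quick pre-flight check, a small helper like the following (a hypothetical function, not part of Kubespray) lists any of them that are missing:

```shell
#!/usr/bin/env bash
# Hypothetical pre-flight helper: print each command name given as an
# argument that is not found on PATH, one per line.
# Returns 1 if at least one command is missing, 0 otherwise.
missing_commands() {
  local cmd status=0
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      printf '%s\n' "$cmd"
      status=1
    fi
  done
  return "$status"
}
```

For example, `missing_commands git python3 virtualenv || echo "install the tools listed above first"`. If only virtualenv is missing, Python's built-in `python3 -m venv "$VENVDIR"` creates a comparable virtual environment.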

You will see a long list of downloaded and installed packages:

```
Collecting ansible==5.7.1
  Downloading ansible-5.7.1.tar.gz (35.7 MB)
     ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 35.7/35.7 MB 8.6 MB/s eta 0:00:00
  Preparing metadata (setup.py) ... done
Collecting ansible-core==2.12.5
  Downloading ansible-core-2.12.5.tar.gz (7.8 MB)
     ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 7.8/7.8 MB 9.6 MB/s eta 0:00:00
  Preparing metadata (setup.py) ... done
Collecting cryptography==3.4.8
  Downloading cryptography-3.4.8-cp36-abi3-macosx_10_10_x86_64.whl (2.0 MB)
     ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 2.0/2.0 MB 9.7 MB/s eta 0:00:00
...
Successfully built ansible ansible-core
Installing collected packages: ruamel.yaml, resolvelib, pbr, netaddr, jmespath, ruamel.yaml.clib, PyYAML, pyparsing, pycparser, MarkupSafe, packaging, jinja2, cffi, cryptography, ansible-core, ansible
```

Once you see the success message, you can continue with defining the inventory.

2. Creating the Inventory

The very first step when using Kubespray is to define the inventory, a core concept of Ansible: a list of servers, identified by hostname or IP address, together with the role each of them has in your setup.

First, copy the sample inventory definitions from the repo…

```shell
cp -rfp inventory/sample inventory/mycluster
```

… then generate the inventory file by passing the IPs of all your servers (controller first, then the workers) to the inventory builder:

```shell
declare -a IPS=(167.235.49.54 159.69.35.153 167.235.73.16)
CONFIG_FILE=inventory/mycluster/hosts.yml python3 contrib/inventory_builder/inventory.py "${IPS[@]}"
```

The inventory builder names the nodes sequentially as node1, node2, and node3. For a setup with a single controller node, edit the generated file inventory/mycluster/hosts.yml: rename the first node to controller, rename the other nodes accordingly, and list only controller in the kube_control_plane group.

My final inventory file then looked like this:

```yaml
all:
  hosts:
    controller:
      ansible_host: 167.235.49.54
      ip: 167.235.49.54
      access_ip: 167.235.49.54
    node1:
      ansible_host: 159.69.35.153
      ip: 159.69.35.153
      access_ip: 159.69.35.153
    node2:
      ansible_host: 167.235.73.16
      ip: 167.235.73.16
      access_ip: 167.235.73.16
  children:
    kube_control_plane:
      hosts:
        controller:
    kube_node:
      hosts:
        controller:
        node1:
        node2:
    etcd:
      hosts:
        controller:
        node1:
        node2:
    k8s_cluster:
      children:
        kube_control_plane:
        kube_node:
    calico_rr:
      hosts: {}
```

This basic inventory file supports many more options, for example dedicated etcd hosts or Calico route reflectors in the calico_rr group. Check the official documentation for more information.
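Instead of renaming the generated entries by hand, the final layout above can also be produced directly. The following is a hypothetical helper, not part of Kubespray, and it hard-codes this article's choices: the first IP becomes controller (the only member of kube_control_plane), the remaining IPs become node1 to nodeN, and every host is placed in kube_node and etcd.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a Kubespray hosts.yml for one control plane
# node plus any number of workers, mirroring the inventory shown above.
# Usage: render_inventory CONTROLLER_IP [WORKER_IP...]
render_inventory() {
  if [ "$#" -lt 1 ]; then
    echo "usage: render_inventory CONTROLLER_IP [WORKER_IP...]" >&2
    return 1
  fi
  local -a ips=("$@")
  local -a names=(controller)
  local i group
  for ((i = 1; i < ${#ips[@]}; i++)); do
    names+=("node$i")
  done

  echo "all:"
  echo "  hosts:"
  for i in "${!ips[@]}"; do
    echo "    ${names[i]}:"
    echo "      ansible_host: ${ips[i]}"
    echo "      ip: ${ips[i]}"
    echo "      access_ip: ${ips[i]}"
  done
  echo "  children:"
  echo "    kube_control_plane:"
  echo "      hosts:"
  echo "        controller:"
  # Like the article's file, every host is a worker and an etcd member.
  for group in kube_node etcd; do
    echo "    $group:"
    echo "      hosts:"
    for i in "${!names[@]}"; do
      echo "        ${names[i]}:"
    done
  done
  echo "    k8s_cluster:"
  echo "      children:"
  echo "        kube_control_plane:"
  echo "        kube_node:"
  echo "    calico_rr:"
  echo "      hosts: {}"
}
```

Usage: `render_inventory 167.235.49.54 159.69.35.153 167.235.73.16 > inventory/mycluster/hosts.yml`. Because every host joins etcd, this layout only makes sense for an odd number of hosts, since etcd needs a majority of its members for quorum.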

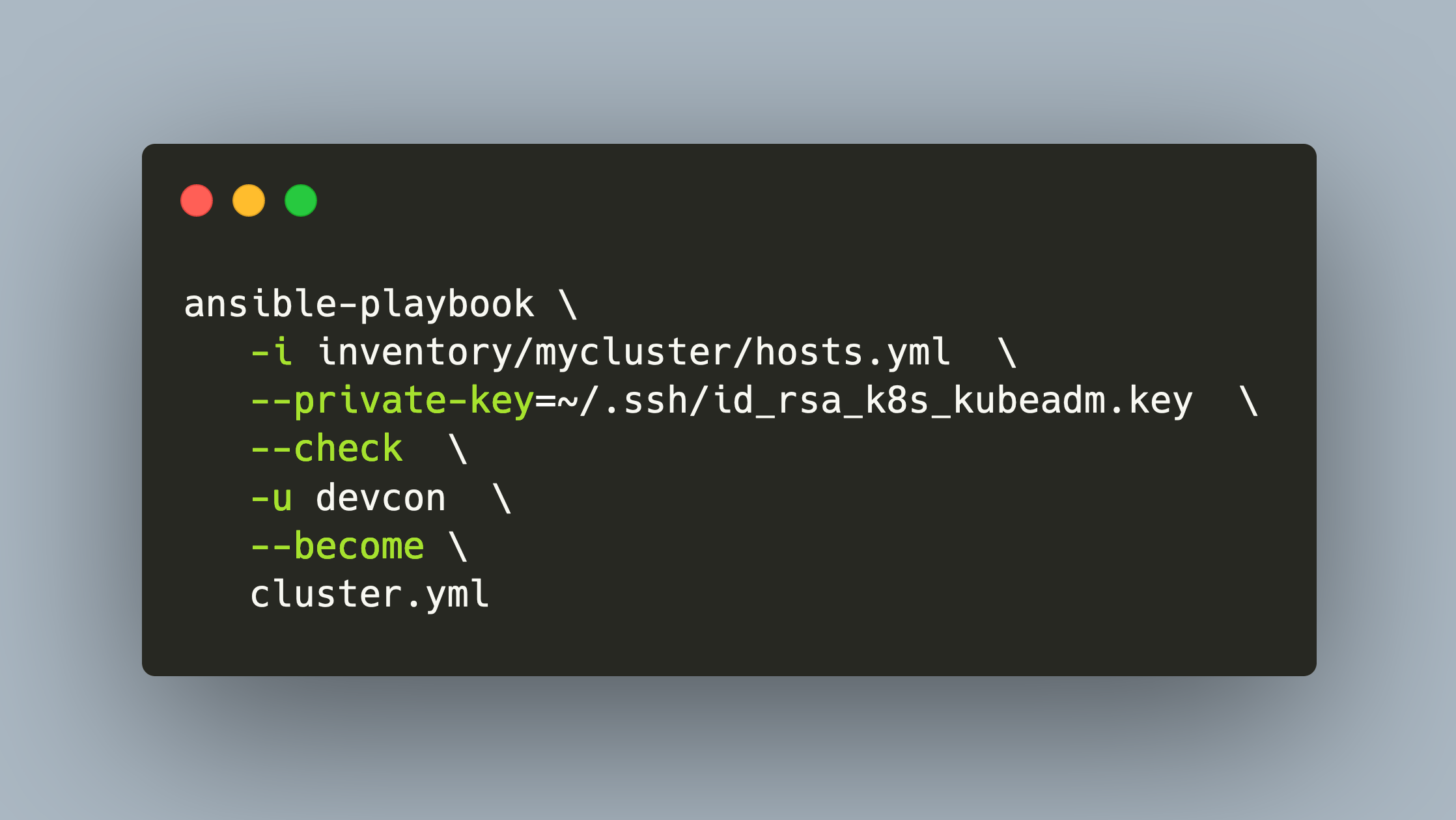

3. Test Run

Let’s run the installation playbook in check mode, which only reports the changes instead of applying them. Use the -u flag to set the user for the SSH connection, and --become so that this user escalates to root on the target servers.

```shell
ansible-playbook \
  -i inventory/mycluster/hosts.yml \
  --private-key=~/.ssh/id_rsa_k8s_kubeadm.key \
  --check \
  -u devcon \
  --become \
  cluster.yml
```

If you see any errors here, they are most likely related to running in check mode; ignore them for now. At the end of the run, you should see a summary of the tasks:

```
Wednesday 28 September 2022 20:45:20 +0200 (0:00:01.114) 0:00:53.828 ***
===============================================================================
kubernetes/preinstall : Create kubernetes directories ------------------------------------------- 5.06s
kubernetes/preinstall : Remove swapfile from /etc/fstab ----------------------------------------- 2.24s
bootstrap-os : Install dbus for the hostname module --------------------------------------------- 2.01s
Gather necessary facts (hardware) --------------------------------------------------------------- 1.90s
bootstrap-os : Assign inventory name to unconfigured hostnames (non-CoreOS, non-Flatcar, Suse and ClearLinux, non-Fedora) --- 1.88s
Gather necessary facts (network) ---------------------------------------------------------------- 1.82s
bootstrap-os : Gather host facts to get ansible_os_family --------------------------------------- 1.81s
Gather minimal facts ---------------------------------------------------------------------------- 1.42s
adduser : User | Create User -------------------------------------------------------------------- 1.33s
kubernetes/preinstall : Ensure ping package ----------------------------------------------------- 1.33s
bootstrap-os : Create remote_tmp for it is used by another module ------------------------------- 1.33s
bootstrap-os : Ensure bash_completion.d folder exists ------------------------------------------- 1.32s
kubernetes/preinstall : set is_fedora_coreos ---------------------------------------------------- 1.29s
kubernetes/preinstall : Create other directories ------------------------------------------------ 1.29s
kubernetes/preinstall : check if booted with ostree --------------------------------------------- 1.23s
adduser : User | Create User Group -------------------------------------------------------------- 1.23s
kubernetes/preinstall : check swap -------------------------------------------------------------- 1.22s
kubernetes/preinstall : Check if kubernetes kubeadm compat cert dir exists ---------------------- 1.18s
kubernetes/preinstall : get content of /etc/resolv.conf ----------------------------------------- 1.16s
kubernetes/preinstall : check status of /etc/resolv.conf ---------------------------------------- 1.16s
```

4. Optional: Kubernetes Component Configuration

The playbooks can be configured through the files inventory/mycluster/group_vars/all/all.yml and inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml. In my installation attempt, I did not change any of these settings.
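Before changing anything, it can be useful to see which values the playbooks will pick up, for example kube_version or kube_network_plugin in k8s-cluster.yml. The helper below is a rough sketch (hypothetical, not a Kubespray tool) that only handles simple single-line `key: value` entries; it is no substitute for a real YAML parser.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the value of a top-level "key: value" line
# from a Kubespray group_vars file. Commented-out defaults ("# key: ...")
# are ignored because the key must start at the beginning of the line.
# Only the first match is printed; multi-line YAML values are not supported.
get_var() {
  local file="$1" key="$2"
  sed -n "s/^${key}:[[:space:]]*//p" "$file" | head -n 1
}
```

Usage: `get_var inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml kube_network_plugin`.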

Cluster Installation and Troubleshooting

The cluster installation is started by executing the Ansible playbook cluster.yml with the required command-line flags:

```shell
ansible-playbook \
  -i inventory/mycluster/hosts.yml \
  --private-key=~/.ssh/id_rsa_k8s_kubeadm.key \
  -u root \
  --become \
  cluster.yml
```
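The check run and the installation differ only in the --check flag and the SSH user, and the upgrade further below reuses the same base flags. A hypothetical wrapper function can keep those flags in one place; its defaults are the values used in this article, and DRY_RUN=1 prints the command instead of running it.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around the ansible-playbook calls in this article.
# It always passes inventory, SSH key, remote user and --become; further
# arguments (e.g. --check, -vvv, the playbook file) are appended as given.
INVENTORY="${INVENTORY:-inventory/mycluster/hosts.yml}"
SSH_KEY="${SSH_KEY:-$HOME/.ssh/id_rsa_k8s_kubeadm.key}"
REMOTE_USER="${REMOTE_USER:-root}"

run_playbook() {
  local cmd=(ansible-playbook
    -i "$INVENTORY"
    --private-key="$SSH_KEY"
    -u "$REMOTE_USER"
    --become
    "$@")
  if [ "${DRY_RUN:-0}" = "1" ]; then
    # Only show what would be executed.
    printf '%s\n' "${cmd[*]}"
  else
    "${cmd[@]}"
  fi
}
```

`REMOTE_USER=devcon run_playbook --check cluster.yml` reproduces the test run, and `run_playbook -vvv cluster.yml` the installation with verbose output.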

The installation was tricky, and I needed several attempts to get it working. My approach was to run the command with the -vvv flag and then inspect the failing Ansible tasks and their configuration.
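With -vvv, the output can easily run into thousands of lines. One way to keep an overview is to save it with tee and filter the `fatal:` lines that Ansible prints for failed tasks and unreachable hosts. The helper name below is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read ansible-playbook output on stdin and print the
# unique host names from "fatal: [host]: ..." lines (failed tasks as well
# as unreachable hosts), so a long -vvv log can be narrowed down quickly.
failed_hosts() {
  sed -n 's/^fatal: \[\([^]]*\)].*/\1/p' | sort -u
}
```

For example, append `2>&1 | tee install.log` to the playbook command and run `failed_hosts < install.log` afterwards.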

The most persistent error was about the Calico CNI. The error message looks like this:

```
fatal: [controller]: FAILED! => {"changed": true, "cmd": "/usr/local/bin/calicoctl.sh replace -f-", "delta": "0:00:00.054945", "end": "2022-09-29 19:26:13.445232", "msg": "non-zero return code", "rc": 1, "start": "2022-09-29 19:26:13.390287", "stderr": "Hit error: error with field RouteReflectorClusterID = '1' (Reason: failed to validate Field: RouteReflectorClusterID because of Tag: ipv4 )", "stderr_lines": ["Hit error: error with field RouteReflectorClusterID = '1' (Reason: failed to validate Field: RouteReflectorClusterID because of Tag: ipv4 )"], "stdout": "Partial success: replaced the first 1 out of 1 'Node' resources:", "stdout_lines": ["Partial success: replaced the first 1 out of 1 'Node' resources:"]}
```

Calico, the CNI plugin, could not be installed. After several attempts, I defined two variables in kubespray/extra_playbooks/inventory/mycluster/group_vars/all/all.yml and changed a task definition in roles/network_plugin/calico/rr/tasks/update-node.yml to use the route_reflector_cluster_id.
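The error message above reports that RouteReflectorClusterID = '1' failed Calico's ipv4 validation, which means the cluster ID has to be written as an IPv4 address in dotted-quad notation. A basic check for such values might look like this (a hypothetical helper; Calico's own validator may be stricter, for example about leading zeros):

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if $1 is an IPv4 address in dotted-quad
# notation with every octet between 0 and 255, fail otherwise.
is_ipv4() {
  local re='^([0-9]{1,3})\.([0-9]{1,3})\.([0-9]{1,3})\.([0-9]{1,3})$'
  local octet
  [[ "$1" =~ $re ]] || return 1
  for octet in "${BASH_REMATCH[@]:1}"; do
    # 10# forces base 10, so an octet like "08" is not parsed as octal.
    (( 10#$octet <= 255 )) || return 1
  done
  return 0
}
```

Usage: `is_ipv4 1 || echo "not a valid route reflector cluster ID"`.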

After these changes, the installation succeeded:

```
PLAY RECAP ***********************************************************************************************************************
localhost    : ok=3    changed=0   unreachable=0  failed=0  skipped=0     rescued=0  ignored=0
controller   : ok=709  changed=33  unreachable=0  failed=0  skipped=1243  rescued=0  ignored=4
node2        : ok=507  changed=6   unreachable=0  failed=0  skipped=770   rescued=0  ignored=2
node3        : ok=507  changed=6   unreachable=0  failed=0  skipped=770   rescued=0  ignored=2
```

Let’s see how to access the cluster.

Access your Kubernetes Cluster (and Troubleshooting)

To work with your cluster, you need the kubectl binary, ideally in the same version as the cluster, and the kubeconfig file. A Kubespray installation puts both on the controller nodes. So you can either log in to a controller node via SSH and work from there, or install kubectl manually on your Kubespray controller and copy the kubeconfig file to it.

Let’s see some information about the cluster and its nodes. To my surprise, several errors occurred: the worker nodes were not ready, the coredns deployment could not schedule a pod, and the calico pods were crashing.

```
$> kubectl get nodes
NAME         STATUS     ROLES           AGE   VERSION
controller   Ready      control-plane   37h   v1.24.6
worker1      NotReady   <none>          37h   v1.24.6
worker2      NotReady   <none>          37h   v1.24.6

$> kubectl get deploy -A
NAMESPACE     NAME             READY   UP-TO-DATE   AVAILABLE   AGE
kube-system   coredns          1/2     2            1           22h
kube-system   dns-autoscaler   1/1     1            1           22h
```
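Reading such tables by eye works for three nodes; a small filter makes the check scriptable. This hypothetical helper reads `kubectl get nodes` output on stdin and prints every node whose STATUS column is not exactly Ready. Cordoned nodes, shown as Ready,SchedulingDisabled, are reported as well.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read "kubectl get nodes" output (with header line)
# on stdin and print the name of every node whose STATUS is not "Ready".
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}
```

Usage: `kubectl get nodes | not_ready_nodes`. To simply block until all nodes are ready, `kubectl wait --for=condition=Ready nodes --all --timeout=300s` does the job without a script.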

Again, it took several troubleshooting attempts, and for the same reasons as before, I document them here.

Error: Worker Nodes not ready

Looking at the kubelet logs on the worker nodes, I could see this:

```
Oct 01 08:44:53 worker1 kubelet[1176]: I1001 08:44:53.877700 1176 reconciler.go:254] "operationExecutor.MountVolume started for volume \"etc-nginx\" (UniqueName: \"kubernetes.io/host-path/9e50e3cca2a34ce5d8f21c4508ba5796-etc-nginx\") pod \"nginx-proxy-node2\" (UID: \"9e50e3cca2a34ce5d8f21c4508ba5796\") " pod="kube-system/nginx-proxy-node2"
Oct 01 08:44:53 worker1 kubelet[1176]: I1001 08:44:53.877928 1176 operation_generator.go:703] "MountVolume.SetUp succeeded for volume \"etc-nginx\" (UniqueName: \"kubernetes.io/host-path/9e50e3cca2a34ce5d8f21c4508ba5796-etc-nginx\") pod \"nginx-proxy-node2\" (UID: \"9e50e3cca2a34ce5d8f21c4508ba5796\") " pod="kube-system/nginx-proxy-node2"
Oct 01 08:44:53 worker1 kubelet[1176]: E1001 08:44:53.965399 1176 kubelet.go:2424] "Error getting node" err="node \"node2\" not found"
```
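To find such mismatches in a long kubelet log, a hypothetical filter can extract the host that logged an "Error getting node" message together with the node name the kubelet was looking for. It assumes journalctl's default short output format, in which the fourth field is the host name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read kubelet journal lines (e.g. from
# "journalctl -u kubelet") on stdin. For every "Error getting node"
# message, print the host that logged it and the node name it searched for.
node_name_mismatch() {
  awk '/"Error getting node"/ {
    # The node name sits between escaped quotes: node \"NAME\" not found
    if (match($0, /node \\"[^\\"]*\\" not found/)) {
      # 7 = length of the prefix node \", 12 = length of the suffix \" not found
      print $4, substr($0, RSTART + 7, RLENGTH - 19)
    }
  }' | sort -u
}
```

On a worker node: `journalctl -u kubelet | node_name_mismatch`. An output line such as `worker1 node2` means the host and the expected node name differ.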

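The kubelet on host worker1 is looking for a node named node2, which suggests that the node names in the inventory did not match the actual hostnames. I removed the nodes and added them again, following the documentation: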

- run the remove-node.yml playbook, passing the node names

- on the controller node, run kubectl delete node for each affected node

- confirm that kubectl get nodes only shows the controller node

- update hosts.yml with the new and correct hostnames

- run the cluster.yml playbook again
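The first three steps can be scripted as sketched below. Node names, the SSH key, and the controller address are taken from this article and are assumptions for any other setup. remove-node.yml expects the inventory names in the node extra variable, while kubectl delete node needs the names the nodes registered with; DRY_RUN=1 only prints the commands.

```shell
#!/usr/bin/env bash
# Sketch of steps 1-3 above; addresses and names are this article's values.
INVENTORY="inventory/mycluster/hosts.yml"
SSH_KEY="$HOME/.ssh/id_rsa_k8s_kubeadm.key"
CONTROLLER="root@167.235.49.54"

# Print the command when DRY_RUN=1, otherwise execute it.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Usage: remove_nodes INVENTORY_NAMES REGISTERED_NAME...
#   INVENTORY_NAMES: comma-separated names from hosts.yml, e.g. node1,node2
#   REGISTERED_NAME: names shown by "kubectl get nodes", e.g. worker1
remove_nodes() {
  local inventory_names="$1" node
  shift
  # 1. Remove the nodes with Kubespray's remove-node playbook.
  run ansible-playbook -i "$INVENTORY" --private-key="$SSH_KEY" -u root \
    --become remove-node.yml -e "node=$inventory_names"
  # 2. Delete leftover Node objects; already deleted ones are ignored.
  for node in "$@"; do
    run ssh -i "$SSH_KEY" "$CONTROLLER" kubectl delete node "$node" --ignore-not-found
  done
  # 3. Only the controller should be listed now.
  run ssh -i "$SSH_KEY" "$CONTROLLER" kubectl get nodes
}
```

Steps 4 and 5, fixing the names in hosts.yml and running cluster.yml again, remain manual.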

After this, the above error message vanished.

Error: CoreDNS Pod not scheduled

Let’s check why a coredns pod cannot be scheduled. Looking at the pods, the pending coredns pod has not been assigned to any node (both NODE and NOMINATED NODE are <none>), which is strange.

```
NAME                       READY   STATUS                  RESTARTS       AGE   IP              NODE         NOMINATED NODE   READINESS GATES
calico-node-7g8dh          0/1     Init:CrashLoopBackOff   10 (22s ago)   27m   159.69.35.153   worker1      <none>           <none>
calico-node-bcwhh          0/1     Init:CrashLoopBackOff   14 (47s ago)   27m   167.235.73.16   worker2      <none>           <none>
calico-node-mprfp          1/1     Running                 1 (120m ago)   40h   167.235.49.54   controller   <none>           <none>
coredns-74d6c5659f-c7ddd   0/1     Pending                 0              25h   <none>          <none>       <none>           <none>
coredns-74d6c5659f-wjl7q   1/1     Running                 1 (120m ago)   25h   10.233.97.132   controller   <none>           <none>
```

I scaled the coredns deployment down to 1 replica, and then it worked.
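Spotting pods like these in a long listing can be automated with a hypothetical filter for `kubectl get pods` output. It expects a single namespace (no -A, which adds a NAMESPACE column) and prints every pod that is not Running or has fewer ready containers than expected; note that pods of completed jobs would be listed as well.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read "kubectl get pods" output (with header line)
# on stdin and print NAME and STATUS of every pod that is not Running or
# whose READY column shows fewer ready containers than total (e.g. 0/1).
unhealthy_pods() {
  awk 'NR > 1 {
    split($2, ready, "/")
    if ($3 != "Running" || ready[1] != ready[2]) print $1, $3
  }'
}
```

Usage: `kubectl -n kube-system get pods -o wide | unhealthy_pods`.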

Error: Calico Pods keep crashing

Finally, let’s check why the Calico pods keep crashing by looking at their logs:

a. The calico-node pod consists of three init containers and a main container. Without -c, kubectl logs defaults to the main container:

```
$> kubectl logs calico-node-7g8dh
Defaulted container "calico-node" out of: calico-node, upgrade-ipam (init), install-cni (init), flexvol-driver (init)
Error from server (BadRequest): container "calico-node" in pod "calico-node-7g8dh" is waiting to start: PodInitializing
```

b. Check the main container: it is waiting to start.

```
$> kubectl logs calico-node-7g8dh -c calico-node
Error from server (BadRequest): container "calico-node" in pod "calico-node-7g8dh" is waiting to start: PodInitializing
```

c. Check the upgrade-ipam init container: it completes successfully.

```
$> kubectl logs calico-node-7g8dh -c upgrade-ipam
2022-10-01 10:53:43.347 [INFO][1] ipam_plugin.go 75: migrating from host-local to calico-ipam...
2022-10-01 10:53:43.349 [INFO][1] migrate.go 67: checking host-local IPAM data dir dir existence...
2022-10-01 10:53:43.349 [INFO][1] migrate.go 69: host-local IPAM data dir dir not found; no migration necessary, successfully exiting...
2022-10-01 10:53:43.349 [INFO][1] ipam_plugin.go 105: migration from host-local to calico-ipam complete node="worker1"
```

d. Check the install-cni init container: it fails with a configuration error.

```
$> kubectl logs calico-node-7g8dh -c install-cni
time="2022-10-01T11:27:59Z" level=info msg="Running as a Kubernetes pod" source="install.go:140"
2022-10-01 11:27:59.951 [INFO][1] cni-installer/<nil> <nil>: File is already up to date, skipping file="/host/opt/cni/bin/bandwidth"
#...
2022-10-01 11:28:00.215 [INFO][1] cni-installer/<nil> <nil>: Wrote Calico CNI binaries to /host/opt/cni/bin
2022-10-01 11:28:00.298 [INFO][1] cni-installer/<nil> <nil>: CNI plugin version: v3.23.3
2022-10-01 11:28:00.299 [INFO][1] cni-installer/<nil> <nil>: /host/secondary-bin-dir is not writeable, skipping
W1001 11:28:00.299160 1 client_config.go:617] Neither --kubeconfig nor --controller was specified. Using the inClusterConfig. This might not work.
time="2022-10-01T11:28:00Z" level=info msg="Using CNI config template from CNI_NETWORK_CONFIG_FILE" source="install.go:335"
time="2022-10-01T11:28:00Z" level=fatal msg="open /host/etc/cni/net.d/calico.conflist.template: no such file or directory" source="install.go:339"
```

e. To be sure, check the last init container, flexvol-driver: it is also waiting to start.

```
$> kubectl logs calico-node-7g8dh -c flexvol-driver
Error from server (BadRequest): container "flexvol-driver" in pod "calico-node-7g8dh" is waiting to start: PodInitializing
```

OK, the install-cni init container of the Calico pod fails because a configuration file is missing. Let’s see how this container is defined, using the output of kubectl describe pod:

```
  install-cni:
    Image:      quay.io/calico/cni:v3.23.3
    Port:       <none>
    Host Port:  <none>
    Command:
      /opt/cni/bin/install
    Environment Variables from:
      kubernetes-services-endpoint  ConfigMap  Optional: true
    Environment:
      CNI_CONF_NAME:            10-calico.conflist
      UPDATE_CNI_BINARIES:      true
      CNI_NETWORK_CONFIG_FILE:  /host/etc/cni/net.d/calico.conflist.template
      SLEEP:                    false
      KUBERNETES_NODE_NAME:      (v1:spec.nodeName)
    Mounts:
      /host/etc/cni/net.d from cni-net-dir (rw)
      /host/opt/cni/bin from cni-bin-dir (rw)
  flexvol-driver:
    ..
Volumes:
  cni-net-dir:
    Type:          HostPath (bare host directory volume)
    Path:          /etc/cni/net.d
    HostPathType:
  cni-bin-dir:
    Type:          HostPath (bare host directory volume)
    Path:          /opt/cni/bin
    HostPathType:
```

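Inside the container, /host/etc/cni/net.d is a hostPath mount of /etc/cni/net.d on the node, so the template has to exist on each node itself. On the worker nodes, there is no file at /etc/cni/net.d/calico.conflist.template, but it exists on the controller. After copying it from the controller to the worker nodes, the Calico pods could start: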

```
calico-node-7g8dh   1/1   Running   0              48m   159.69.35.153   worker1      <none>   <none>
calico-node-bcwhh   1/1   Running   0              48m   167.235.73.16   worker2      <none>   <none>
calico-node-mprfp   1/1   Running   1 (141m ago)   40h   167.235.49.54   controller   <none>   <none>
```
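The manual copy step can be sketched with scp -3, which copies between two remote hosts by routing the data through the local machine, so the controller does not need SSH access to the workers. The host addresses, the root user, and the key path are this article's values; DRY_RUN=1 prints the commands only.

```shell
#!/usr/bin/env bash
# Sketch of the workaround above: copy the Calico CNI config template from
# the controller to every worker node. Values are this article's setup.
TEMPLATE="/etc/cni/net.d/calico.conflist.template"
SSH_KEY="$HOME/.ssh/id_rsa_k8s_kubeadm.key"
CONTROLLER_HOST="root@167.235.49.54"
WORKER_HOSTS=("root@159.69.35.153" "root@167.235.73.16")

copy_cni_template() {
  local worker
  for worker in "${WORKER_HOSTS[@]}"; do
    # -3 routes the transfer through the local machine.
    local cmd=(scp -3 -i "$SSH_KEY" "$CONTROLLER_HOST:$TEMPLATE" "$worker:$TEMPLATE")
    if [ "${DRY_RUN:-0}" = "1" ]; then
      printf '%s\n' "${cmd[*]}"
    else
      "${cmd[@]}" || return 1
    fi
  done
}
```

Since the calico-node pods are in CrashLoopBackOff, Kubernetes keeps restarting their init containers, which then find the template on their next attempt.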

The Cluster works

And with this, the cluster is finally ready.

```
NAME         STATUS   ROLES           AGE   VERSION
controller   Ready    control-plane   40h   v1.24.6
worker1      Ready    <none>          48m   v1.24.6
worker2      Ready    <none>          48m   v1.24.6
```

This was another extensive troubleshooting lesson, but I’m happy that it finally worked.

Upgrade the Kubernetes Version

Upgrading the cluster can be done in two ways. The unsafe method installs the new versions of the Kubernetes component binaries on the servers and restarts them. This can disrupt your cluster, for example when a misconfigured component does not start anymore. The other way is to upgrade the nodes step by step, including a drain operation that moves workloads to other nodes. In both cases, you still need to manually migrate resources that use deprecated API versions, as before.

Let’s upgrade the nodes the safe way, starting with the controller node. We pass the parameter upgrade_node_post_upgrade_confirm, which pauses the playbook after each node has been upgraded and before it is uncordoned, according to this task definition.

Run the following commands:

```shell
ansible-playbook \
  -i inventory/mycluster/hosts.yml \
  --private-key=~/.ssh/id_rsa_k8s_kubeadm.key \
  -u root \
  --become \
  --force \
  -v \
  -e kube_version=v1.25.2 \
  --extra-vars "upgrade_node_post_upgrade_confirm=true" \
  --limit "kube_control_plane" \
  upgrade-cluster.yml
```
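One sanity check worth doing before such a run: kubeadm, which Kubespray uses under the hood, does not support skipping minor versions, so the target kube_version should be at most one minor release ahead of the running version (here v1.24.6 to v1.25.2). A hypothetical helper for that check:

```shell
#!/usr/bin/env bash
# Hypothetical pre-flight check for Kubernetes upgrades.
# Succeeds if TARGET is a patch upgrade of CURRENT or exactly one minor
# release newer; returns 1 otherwise and 2 for malformed versions.
# Usage: check_upgrade_path CURRENT TARGET   (e.g. v1.24.6 v1.25.2)
check_upgrade_path() {
  local re='^v([0-9]+)\.([0-9]+)\.([0-9]+)$'
  local cur_major cur_minor cur_patch new_major new_minor new_patch
  if ! [[ "$1" =~ $re ]]; then
    echo "malformed version: $1" >&2
    return 2
  fi
  cur_major=${BASH_REMATCH[1]} cur_minor=${BASH_REMATCH[2]} cur_patch=${BASH_REMATCH[3]}
  if ! [[ "$2" =~ $re ]]; then
    echo "malformed version: $2" >&2
    return 2
  fi
  new_major=${BASH_REMATCH[1]} new_minor=${BASH_REMATCH[2]} new_patch=${BASH_REMATCH[3]}

  (( new_major == cur_major )) || return 1
  if (( new_minor == cur_minor )); then
    (( new_patch >= cur_patch ))        # patch upgrade or re-run
  else
    (( new_minor == cur_minor + 1 ))    # exactly one minor step forward
  fi
}
```

Usage: `check_upgrade_path v1.24.6 v1.25.2 && echo "upgrade path OK"`.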

However, as before, I ran into errors, and I found a related open GitHub issue. At this point, I stopped trying to upgrade and concluded my work with Kubespray.

Conclusion

The Kubespray distribution offers complete configuration of a Kubernetes cluster with the power of Ansible. From the servers, through the Kubernetes components, to their individual configuration, everything can be controlled as versioned infrastructure as code. This article showed you how to use Kubespray to set up a cluster with one controller and two worker nodes. You learned about the Ansible base installation, the steps to define the inventory, and finally how to install the cluster.

However, my experience with Kubespray was marred by extensive troubleshooting. First, to get the installation to complete, I needed to set specific options in the Ansible configuration. Second, in the running cluster, the worker nodes were not ready. Drilling into the Kubernetes log files and pod logs, I could trace one error to a missing configuration file that was present on the controller, but not on the worker nodes. And third, I could not upgrade the Kubernetes version.