When using Large Language Models (LLMs) via an API or locally, a quasi-standard for representing the chat history is recognizable: A list of messages, and each message denominates the speaker and the actual content. This format is provided by any OpenAI API compatible LLM engine, and it is also used internally by tools that provide a CLI-like invocation, for example AutoGen.

Leveraging this format, the focus of this article is to implement a custom Python GUI with the help of the Gradio framework. The GUI enables to select the concrete LLM model, its system prompt and temperature, as well as to start a history-aware conversation. You will learn about the essential GUI abstractions, how to implement session-persistent objects, and the intricacies of data formats.

The technical context of this article is Python v3.11, gradio v4.28.3, as well as ollama v0.1.7 and autogen v0.2.27 for running LLMs locally. All code examples should work with newer library versions too, but some code changes might be needed.

Motivation: Designing a GUI from Scratch

An well-informed reader might raise the question why to design a GUI when several tools already exists, such as the open-webui and Mysty for visualizing any OpenAI API compatible LLM, or projects supporting agents and local tools like MemGPT, LLM Studio or Autogen Studio.

The main motivation for this article is to explore GUI design for LLMs employed as agents and provide them with configurable access to local data sources, such as collections of ebooks or time-series databases with IOT sensor information. I want to experience the intricacies of data format and system interface from scratch to design a personal agent with access to my data, ensuring that no other third party is involved.

My first attempt to design this GUI was based on Streamlit. The GUI creation worked flawless, but I hit an essential limitation: Streamlit apps reload their code on every interaction, which triggers the recreation of LLM objects too, removing the chat history in the process. Although it is possible to define global stateful objects, the framework relies internally on serialization with the pickle data format. This could not be applied to an autogen agent. Therefore, I could not continue with Streamlit.

The second attempt is based on Gradio. In this framework, objects globally defined persist when GUI components change or when the browser tab is refreshed. Therefore, Gradio is the GUI design of choice.

GUI Layout

Three distinct parts are shown:

- A box for model configuration (model name, temperature, and system prompt)

- A box showing the conversation history

- The chat dialogue interface, including chat bubbles, and input field for new messages, and buttons to retry or reset an interaction.

Each of the following section highlights the necessary development steps.

Model Configuration Box

In Gradio, layouts are structured with the help of blocks, rows, and columns. Blocks are a high level component that serves as a container, providing the launch() method which starts a local webserver that serves the page. And inside a box, rows define horizontal and columns define vertical slices in which other components are automatically arranged.

The principal layout is to stack the configuration and history next to each other, as a row with two columns, followed by a single row with the chat interface. The implementation starts with this:

with gr.Blocks() as gui:

with gr.Row() as config:

with gr.Column():

## model config

with gr.Column():

## history

with gr.Row() as chat:

## chat interface

To configure the model, a radio box for model names, a slider for the temperature, and a textbox for the system prompt are rendered. The state of these components refers to global defined variables. Here is the code:

MODEL_LIST = ["llama3", "starling-lm", "qwen"]

TEMPERATURE = 0.0

SYSTEM_PROMPT = """

You are a knowledgeable librarian that answers question from your supervisor.

# ...

"""

model = gr.Radio(MODEL_LIST, value="llama3",label="Model")

temperature = gr.Slider(TEMPERATURE, 1.0, value=0.0, label="Temperature")

system_prompt = gr.Textbox(SYSTEM_PROMPT, label="System Prompt")

In order to actually change the model, an Interface component is added. This component is passed a function name and the field names, and will then render a “Clear” and “Submit” button. The submit button invokes the method with the defined inputs, which will then update the global state.

The update method is this:

def configure(m: str, t: float, s: str):

global MODEL, TEMPERATURE, SYSTEM_PROMPT

if MODEL != m or TEMPERATURE != t or SYSTEM_PROMPT != s:

MODEL, TEMPERATURE, SYSTEM_PROMPT = m, t, s

MODEL = 'qwen:1.8b' if MODEL == 'qwen' else MODEL

load_model()

return MODEL, TEMPERATURE, SYSTEM_PROMPT

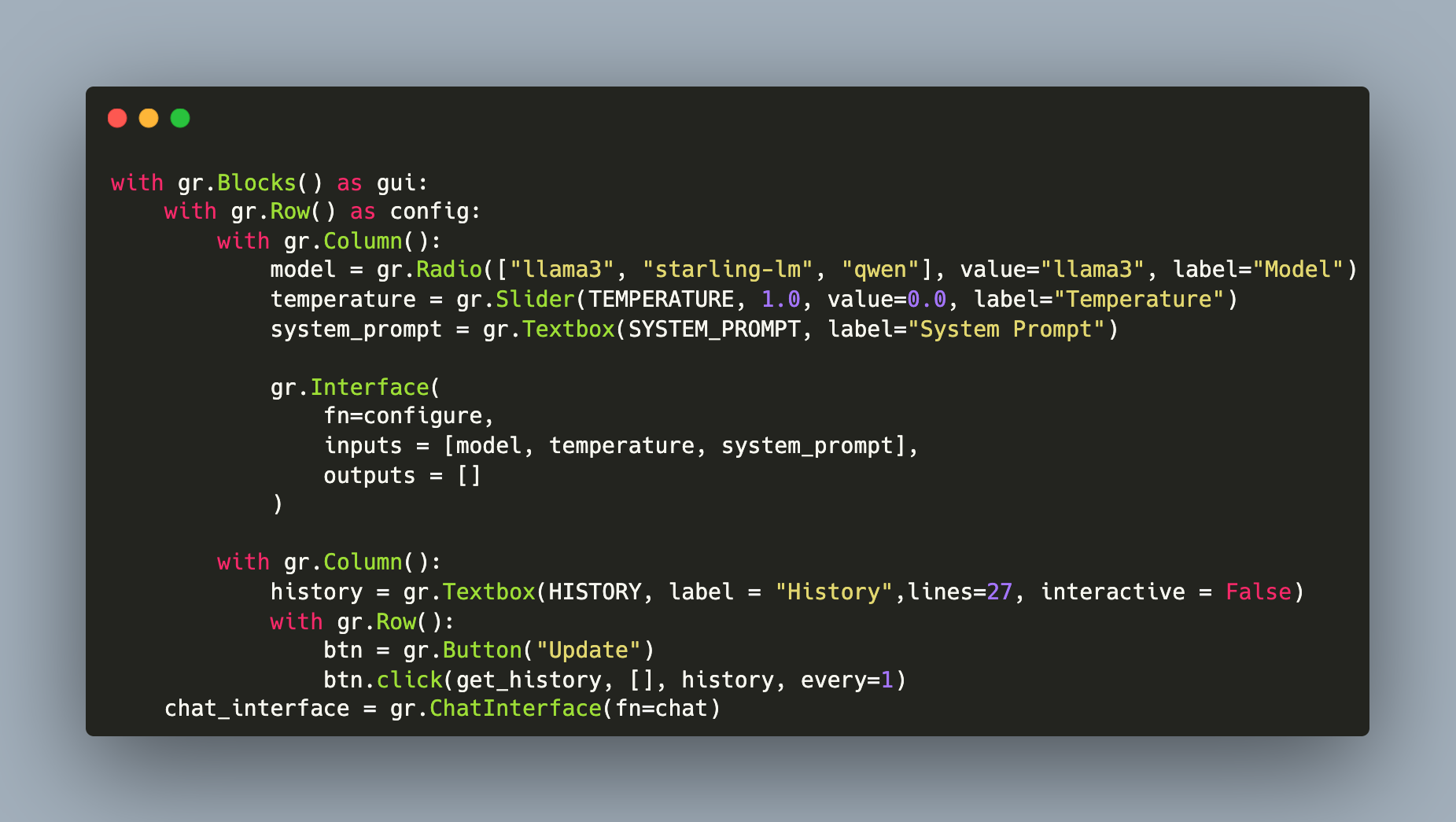

And the interface component that ties everything together is this:

gr.Interface(

fn=configure,

inputs = [model, temperature, system_prompt],

outputs = []

)

This was the hardest part to design. The essential abstraction of GUI components, global state variables, and functions that are triggered through UI interactions to modify the state are the essential building blocks that form the rest of the interface as well.

Chat History Box

The history box is a non-editable text field. It renders the global “HISTORY” variable in a continuous, self-triggering loop once a button was clicked.

Its implementation follows the same explained building patterns. First the global state and the update method:

HISTORY = []

def get_history():

global HISTORY

return HISTORY

Then the component definition:

with gr.Column():

history = gr.Textbox(HISTORY, label = "History",lines=27, interactive = False)

with gr.Row():

btn = gr.Button("Update")

btn.click(get_history, [], history, every=1)

Chat Invocation and Visualization

Just very recently, Gradio added a self-contained chat interface component that includes all required UI components and their interactions out of the box. And in addition, there are plenty configuration options, including additional input component as explained in the ChatInterface documentation.

We can stick with the defaults though and just need a single line:

chat_interface = gr.ChatInterface(fn=chat)

The main implementation effort lies in the chat method. The convention is to pass the user message and an internal history object (a list of question-answer pairs) to this method, and return a string with the LLMs answer. Let’s tackle these points step-by-step.

Global LLM Engine Objects

Following my earlier article about autogen agents and Ollama, this project uses the same configuration. Globally, an agent and a user are created, and the users initiate_chat method invokes. This conversation persists after the Gradio app launched, which makes the complete interaction history-aware.

The essential (and abbreviated code) is this:

AGENT = None

USER = None

def load_model():

global AGENT, user

AGENT = GradioAssistantAgent(

name="librarian",

system_message=SYSTEM_PROMPT,

###

)

USER = GradioUserProxyAgent(

name="supervisor",

##

)

USER.initiate_chat(

AGENT,

message="Please echo the system prompt.",

clear_history=False,

##

)

USER.stop_reply_at_receive(AGENT)

A small tweak is necessary: Empty messages returned by the agent need to be captured. Therefore, seperate classes are defined and the concrete agent and user objects are derived from it.

class GradioAssistantAgent(autogen.AssistantAgent):

def _process_received_message(self, message, sender, silent):

if not message == "":

return super()._process_received_message(message, sender, silent)

class GradioUserProxyAgent(autogen.UserProxyAgent):

def _process_received_message(self, message, sender, silent):

if not message == "":

return super()._process_received_message(message, sender, silent)

Engine Invocation

With these global objects defined, the LLM invocation inside the chat method uses the native Autogen methods. To stay compatible with a potential multi-agent setup too, only the last summarized message will be returned.

The code is as follows:

def chat(message, history):

USER.send(message, recipient=AGENT)

reply = AGENT.last_message()

update_history(message, reply['content'])

yield reply['content']

Dealing With the Chat History

The final piece is to deal with the chat history. Studying the Gradio documentation and examples, I could not figure out how to expose the internal history object that is passed from the chat interface component to the chat object. Therefore, the global HISTORY object contains a copy.

Its implemented with this:

def update_history(message, reply):

global HISTORY

HISTORY.append(message)

HISTORY.append(reply)

Complete Application and Code

The complete application looks like this:

Here is the source code:

/*

* ---------------------------------------

* Copyright (c) Sebastian Günther 2024 |

* |

* devcon@admantium.com |

* |

* SPDX-License-Identifier: BSD-3-Clause |

* ---------------------------------------

*/

import asyncio

import autogen

import time

import random

import numpy as np

import json

from json import JSONDecodeError

import gradio as gr

import os

os.environ["MODEL_NAME"] ="llama3"

os.environ['OAI_CONFIG_LIST'] ='[{"model": "llama3","api_key": "EMPTY", "max_tokens":1000}]'

os.environ["AUTOGEN_USE_DOCKER"] = "false"

# Agent Declarations

class GradioAssistantAgent(autogen.AssistantAgent):

def _process_received_message(self, message, sender, silent):

if not message == "":

return super()._process_received_message(message, sender, silent)

class GradioUserProxyAgent(autogen.UserProxyAgent):

def _process_received_message(self, message, sender, silent):

if not message == "":

return super()._process_received_message(message, sender, silent)

MODEL = "llama3"

MODEL_LIST = ["llama3", "starling-lm", "qwen"]

TEMPERATURE = 0.0

SYSTEM_PROMPT = """

You are a knowledgeable librarian that answers question from your supervisor.

The questions are about books, including details about persons, places, and the story.

Task Details:

- Provide research assistance to on any given topic.

- Conduct a search of our library's databases and save a list of relevant sources.

- Search for the answer in the relevant sources

- Summarize the answer

Resources:

- Project Gutenberg books: https://www.gutenberg.org/ebooks/

Constraints:

- Think step by step

- Be accurate and precise

- Answer briefly, in few words

- Only include sources that you are highly confident of

If you need additional assistance or have questions, ask your supervisor.

"""

HISTORY = []

AGENT = None

USER = None

def load_model():

global AGENT, USER

print("LOADING MODEL")

config_list = [

{

"model": MODEL,

"base_url": "http://127.0.0.1:11434/v1",

"api_key": "ollama",

}

]

system_message = {'role': 'system',

'content': SYSTEM_PROMPT}

AGENT = GradioAssistantAgent(

name="librarian",

system_message=SYSTEM_PROMPT,

llm_config={"config_list": config_list, "timeout": 120, "temperature": TEMPERATURE},

code_execution_config=False,

function_map=None,

)

USER = GradioUserProxyAgent(

name="supervisor",

human_input_mode="NEVER",

max_consecutive_auto_reply=1,

is_termination_msg=lambda x: x.get("content", "").strip().endswith("TERMINATE"),

)

USER.initiate_chat(

AGENT,

message="Please echo the system prompt.",

clear_history=False,

max_consecutive_auto_reply=5,

is_termination_msg=lambda x: x.get("content", "").strip().endswith("TERMINATE"),

)

USER.stop_reply_at_receive(AGENT)

## Gradio

print("SOURCING")

def configure(m: str, t: float, s: str):

global MODEL, TEMPERATURE, SYSTEM_PROMPT

if MODEL != m or TEMPERATURE != t or SYSTEM_PROMPT != s:

MODEL, TEMPERATURE, SYSTEM_PROMPT = m, t, s

MODEL = 'qwen:1.8b' if MODEL == 'qwen' else MODEL

load_model()

return MODEL, TEMPERATURE, SYSTEM_PROMPT

def chat(message, history):

USER.send(message, recipient=AGENT)

reply = AGENT.last_message()

update_history(message, reply['content'])

yield reply['content']

def get_history():

global HISTORY

return HISTORY

def update_history(message, reply):

global HISTORY

HISTORY.append(message)

HISTORY.append(reply)

print("HISTORY")

print(HISTORY)

if __name__ == "__main__":

print("START")

with gr.Blocks() as gui:

with gr.Row() as config:

with gr.Column():

model = gr.Radio(["llama3", "starling-lm", "qwen"], value="llama3", label="Model")

temperature = gr.Slider(TEMPERATURE, 1.0, value=0.0, label="Temperature")

system_prompt = gr.Textbox(SYSTEM_PROMPT, label="System Prompt")

gr.Interface(

fn=configure,

inputs = [model, temperature, system_prompt],

outputs = []

)

with gr.Column():

history = gr.Textbox(HISTORY, label = "History",lines=27, interactive = False)

with gr.Row():

btn = gr.Button("Update")

btn.click(get_history, [], history, every=1)

chat_interface = gr.ChatInterface(fn=chat)

load_model()

gui.launch()

Conclusion

This article explored how to design a custom GUI for running LLMs. Three specific feature sets were presented and implemented. First, the configuration of the model, system prompt and temperature. Second, showing the complete chat history in its native list format. Third, starting and visualizing a continuous chat. You learned how to structure the GUI with the Gradio framework, leveraging its high-level components to create blocks of connected components. You saw how component values are exposed as state inside the block, and how to define global objects that persist the chat history as well as the loaded LLM models.