Nomad gives you several options for persisting data. In this article, I share the painful lessons I learned while systematically trying Docker bind mounts, Docker volume mounts and NFS.

When you run a stateful container with Nomad, you need to consider how to persist data between Docker containers that run on different hosts. What you want is this: Independent of where the container runs, it should have access to the same data. This sounds obvious, and yet I had severe trouble finding the right solution. I spent half a day trying to get either Docker volumes or Docker bind mounts to work with a shared NFS dir, with no success, and then another half day systematically trying all options.

This article is about the journey to achieve data persistence. You will read about painful lessons learned and see the final, working solution.

Nomad Docker Bind Mounts

Nomad provides different mounting options at different places in the configuration. The first option is to use host volume mounts: You define a host-specific path which is mapped to any path in the Docker container. You need to provide three config stanzas:

- The Nomad agent config needs a host_volume config

- The job group needs a volume declaration

- The task needs a volume_mount declaration

Let’s see this setup, assuming we want to mount a volume in which we persist the monitoring data captured by Prometheus.

nomad.conf.hcl

client {

host_volume "prometheus_vol" {

path = "/mnt/nfs/nomad/volumes/prometheus"

read_only = false

}

}

monitoring.job.hcl

group "monitoring" {

volume "prometheus_grp" {

type = "host"

source = "prometheus_vol"

read_only = false

}

task "grafana" {

volume_mount {

volume = "prometheus_grp"

destination = "/prometheus"

read_only = false

}

...

}
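The three stanzas are linked by name, which is easy to get wrong. As a minimal sketch (the job name, datacenter and task name are assumptions, the rest follows the snippets above): the group's volume block points at the client's host_volume through source, and the task's volume_mount points at the group's volume block through volume.

```hcl
# monitoring.job.hcl (sketch; job name, datacenter and task name are assumptions)
job "monitoring" {
  datacenters = ["dc1"]

  group "monitoring" {
    # "source" must match the host_volume name in the client config
    volume "prometheus_grp" {
      type      = "host"
      source    = "prometheus_vol"
      read_only = false
    }

    task "prometheus" {
      driver = "docker"

      config {
        image = "prom/prometheus:v2.16.0"
      }

      # "volume" must match the group-level volume name, not the host_volume name
      volume_mount {
        volume      = "prometheus_grp"
        destination = "/prometheus"
        read_only   = false
      }
    }
  }
}
```

With this wiring, Nomad only places the group on clients that expose a host volume named prometheus_vol.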

Nomad Docker Volume Mounts

The second option is to use Docker volume mounts. Volumes are named, persistent data stores that reside on the host under /var/lib/docker/volumes. When the job is run, Docker will create the volume if it does not exist, or mount it if it does [1]. It’s the same as interacting with docker volume create.

>> docker volume ls

DRIVER VOLUME NAME

local 1e9b98423c15f1aa833b131ab9f6c72b0433a212a89b5b75a30424ba917f09d9

local prometheus_grp

>> docker volume inspect prometheus_grp

[

{

"CreatedAt": "2020-03-14T11:54:04Z",

"Driver": "local",

"Labels": null,

"Mountpoint": "/var/lib/docker/volumes/prometheus_grp/_data",

"Name": "prometheus_grp",

"Options": null,

"Scope": "local"

}

]

To create a Nomad Docker volume, you need only one config stanza directly in the docker.config path.

monitoring.job.hcl

task "grafana" {

driver = "docker"

config {

mounts = [

{

type = "volume"

target = "/prometheus"

source = "prometheus"

}

]

}

...

}

Day 1 - Trial & Error

First Try: Symlink Docker Volumes to NFS

Much of the Docker documentation recommends using Docker volumes. I thought a volume would be stored in some specific binary format, but it is actually a normal, accessible dir on your machine:

>> ls -la /var/lib/docker/volumes/prometheus_grp/_data/

total 16

drwxr-xr-x 3 nobody nogroup 4096 Mar 14 11:54 .

drwxr-xr-x 3 root root 4096 Mar 14 11:54 ..

-rw-r--r-- 1 nobody nogroup 0 Mar 14 11:54 lock

-rw-r--r-- 1 nobody nogroup 20001 Mar 14 12:13 queries.active

drwxr-xr-x 2 nobody nogroup 4096 Mar 14 12:13 wal

Therefore, my first try was to set a symlink /var/lib/docker/volumes => /mnt/nfs/docker/volumes. I set the symlink on each machine, and then started spinning up Prometheus on different hosts. It did not work! I saw different data on different hosts. And all existing data was gone!

Here is why: When the Docker container starts on a different machine and there is no Docker volume with the given name on that machine, Docker creates one. Because of the symlink, this wipes the target dir /mnt/nfs/docker/volumes/prometheus without asking. This is the default behavior of docker volume create: you don’t get any warning when the target dir already exists!

Can I fix this by creating the Docker volumes manually? I deleted the symlink, deleted all Docker volumes on each node, recreated the symlink and created the Docker volumes on all nodes. Then, I started the container on different nodes again, but Nomad simply overwrote the existing volume declaration.

Second Try: Docker NFS Volumes

Ok. Let’s try to create proper Docker NFS volumes. The command I used is this:

docker volume create --driver local \

--opt type=nfs \

--opt o=addr=192.168.2.203,rw \

--opt device=:/mnt/nfs/volumes/test/ nfs-test

When executing the following test command to mount the volume and create a file, I received an error.

docker run -v nfs-test:/nfs-test busybox touch /nfs-test/hello

docker: Error response from daemon: error while mounting volume '/var/lib/docker/volumes/nfs-test/_data': failed to mount local volume: mount /mnt/nfs/volumes/test:/var/lib/docker/volumes/nfs-test/_data, data: addr=192.168.2.203: invalid argument

For whatever reason, the volume could not be mounted.

Third Try: Docker Bind Volumes

Ok, if Docker volumes will not work, I will instead mount the shared dir /mnt/nfs/volumes/prometheus into the container. This should work because this dir is available on all nodes. I got this solution to work, but then I saw a strange dir access error: Even as the root user, I could not create files inside the shared directory, and I could not delete them either!

This led me to think: Maybe the problems originate from my NFS setup: autofs mounts, and an entry in /etc/fstab to unmount the dir when it is not used.

At this point, I had spent four hours, and I finally gave up for the day.

Day 2 - Systematic Trial & Success

Figuring Out What’s Wrong

What went wrong? The NFS mounts are available on the hosts, and they work. And I could get one Docker container to write to this share. So let’s assume NFS itself works, and the open question is how to tell Nomad to use the network share.

First things first: Let’s properly define a Docker NFS volume. In a Stack Overflow question I saw a Docker Compose file for mounting NFS shares. It uses more options than the command I had been using, so I adopted them.

I created a docker volume share with this command:

docker volume create \

--driver local \

--opt type=nfs \

--opt "o=nfsvers=4,addr=192.168.2.203,nolock,soft,rw" \

--opt device=:/mnt/nfs/volumes/test/ nfs-test

And then I started a docker container, mounted the volume, and put a hello file in the share.

docker run -v nfs-test:/nfs-test busybox touch /nfs-test/hello

That worked! Now let’s start again and systematically try the different options for mounting volumes inside Nomad’s job.group.task stanza.

Nomad Named Volume Declaration

On each node, I removed all existing volumes, and then created the NFS volume with the working command from above.

Then, the first try is to use the named volume inside the task.config stanza.

task "grafana" {

driver = "docker"

config {

mounts = [

{

type = "volume"

target = "/prometheus"

source = "prometheus"

}

]

...

}

}

However, the job could not be executed. I saw this error message:

failed to create container: API error (500): failed to mount local volume: mount :/mnt/nfs/docker/prometheus:/var/lib/docker/volumes/prometheus/_data, data: nfsvers=4,addr=192.168.2.203,nolock,soft: no such file or directory

It seems that Nomad is confused by the extra options of the Docker NFS volume. I then considered declaring the NFS volume directly in the Nomad job, but I could not find any example in the official documentation.
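For reference, the Docker driver's mount configuration does accept a volume_options block with a driver_config, which lets the job itself carry the NFS options instead of relying on a pre-created volume. The following is an untested sketch in the same mounts syntax as above, reusing the options from the docker volume create command. Whether it avoids the error shown here is an assumption, and newer Nomad versions write this as a mount block rather than a mounts list.

```hcl
task "grafana" {
  driver = "docker"

  config {
    mounts = [
      {
        type   = "volume"
        target = "/prometheus"
        source = "prometheus"

        # Untested assumption: pass the NFS settings through the local volume driver
        volume_options {
          driver_config {
            name = "local"
            options = {
              type   = "nfs"
              o      = "nfsvers=4,addr=192.168.2.203,nolock,soft,rw"
              device = ":/mnt/nfs/docker/prometheus"
            }
          }
        }
      }
    ]
  }
}
```

The idea behind this variant is that every node would create the volume with identical options when the task starts, so no manual docker volume create per node would be needed.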

Nomad Bind Mount Declaration

Then I tried a direct bind mount declaration.

task "grafana" {

driver = "docker"

config {

mounts = [

{

type = "bind"

target = "/prometheus"

source = "/mnt/nfs/docker/prometheus"

readonly = false

bind_options {

propagation = "rshared"

}

}

]

...

}

}

The Nomad job executed, and then … something strange happened! The volume prometheus was suddenly an ordinary volume on the node! Nomad overwrites the existing volume, which is what happened to me on day 1 too! To confirm this, I repeated the same steps, and yes, Nomad overwrites the existing Docker volume declaration.

Before the job:

>> docker volume inspect prometheus

[

{

"CreatedAt": "2020-03-18T19:15:57Z",

"Driver": "local",

"Labels": {},

"Mountpoint": "/var/lib/docker/volumes/prometheus/_data",

"Name": "prometheus",

"Options": {

"device": ":/mnt/nfs/docker/prometheus",

"o": "nfsvers=4,addr=192.168.2.203,nolock,soft,rw",

"type": "nfs"

},

"Scope": "local"

}

]

After the job:

>> docker volume inspect prometheus

[

{

"CreatedAt": "2020-03-18T19:17:30Z",

"Driver": "local",

"Labels": null,

"Mountpoint": "/var/lib/docker/volumes/prometheus/_data",

"Name": "prometheus",

"Options": null,

"Scope": "local"

}

]

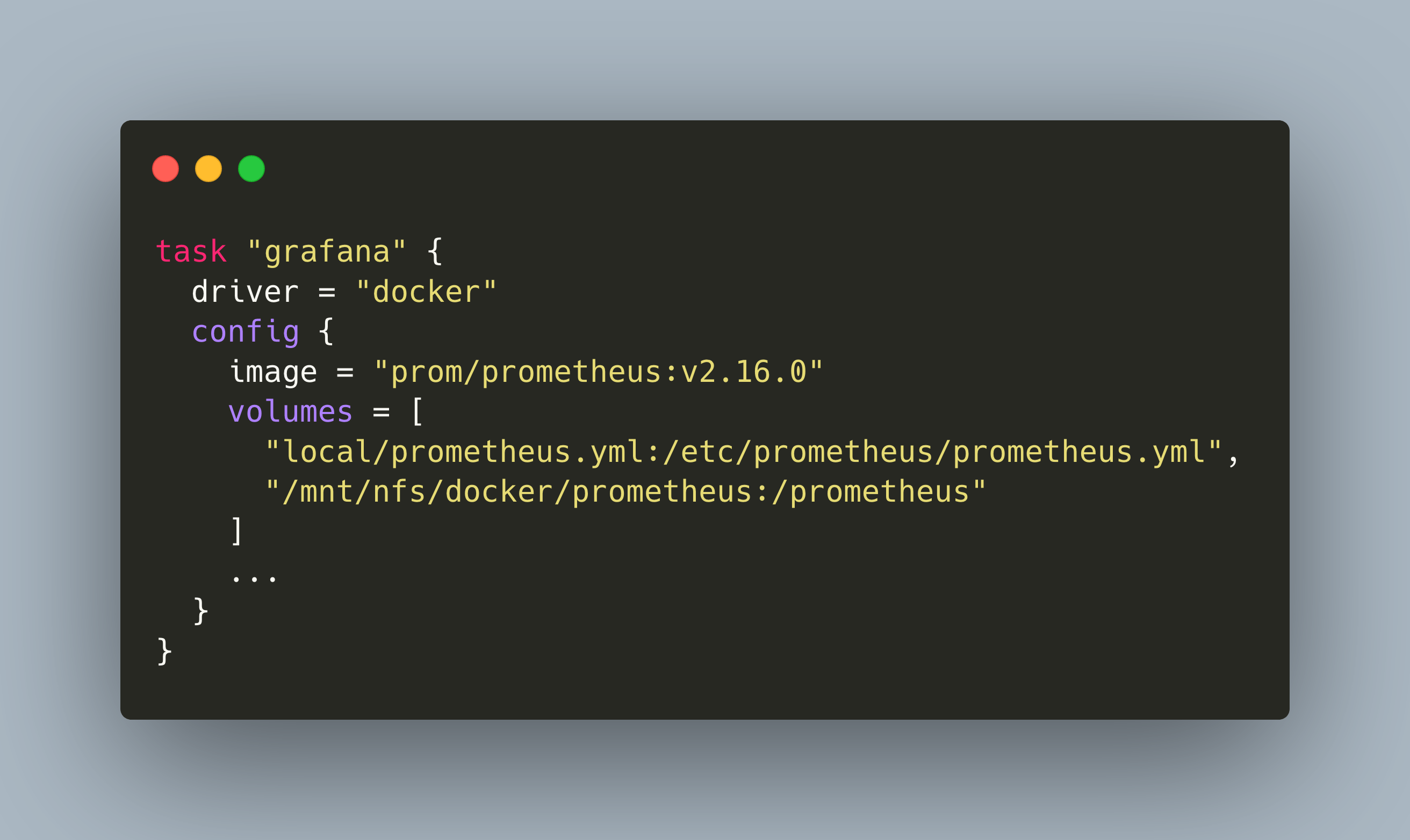

Nomad Volume Declaration

Then I tried this config.volumes declaration:

task "grafana" {

driver = "docker"

config {

image = "prom/prometheus:v2.16.0"

volumes = [

"local/prometheus.yml:/etc/prometheus/prometheus.yml",

"/mnt/nfs/docker/prometheus:/prometheus"

]

...

}

}

I was surprised. The Docker container started. I saw the data streaming. Then, I stopped the container, waited some time, and deployed it on another node. The data was still there! As I stopped and restarted the job several times, the NFS mount steadily accumulated data from different nodes.

I finally got it working!

Solution

The final solution for data persistence boils down to:

- Set up an NFS share on all nodes

- Use a config.volumes declaration with a direct mapping between host source path and container destination path

volumes = [

"/path/to/nfs/share:/path/to/docker/destination"

]

No volume declaration needed. No docker volume creation on the host. Just one line per volume in your Nomad job!
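Put together, a complete job file could look like the sketch below. The job name, datacenter and task name are assumptions for illustration; the image and paths are the ones used in this article.

```hcl
# monitoring.job.hcl (sketch; job name, datacenter and task name are assumptions)
job "monitoring" {
  datacenters = ["dc1"]

  group "monitoring" {
    task "prometheus" {
      driver = "docker"

      config {
        image = "prom/prometheus:v2.16.0"

        # host path on the NFS share : path inside the container
        volumes = [
          "/mnt/nfs/docker/prometheus:/prometheus"
        ]
      }
    }
  }
}
```

The host path must exist and be mounted on every node that can run the job. If Nomad rejects the absolute host path, check whether volumes are enabled in the Docker driver settings of the client; depending on the Nomad version, this is not always on by default.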

Conclusion

When you want to persist data of Docker containers running on different nodes, and have the flexibility to move containers around, then the best solution is to set up an NFS share between the nodes and use direct host-to-Docker mounts. It was hard and painful figuring out how to achieve this. But learning from mistakes can be very educational because you learn why things are not working. The goal was indeed worth the journey!

Footnotes

[1] As I learned later, that’s not correct: It will overwrite the docker volume declaration if it is anything other than a normal volume! If you defined an NFS volume with the same name, it will get overwritten.